7 Great SaaS MVP Examples (and What Founders Can Learn From Them)

In SaaS, your Minimum Viable Product (MVP) isn’t meant to be perfect; it’s meant to be proven. An MVP is the leanest version of your product that...

9 min read

Written by Keith Shields, Mar 25, 2026

Minimum Viable Products (MVPs) are supposed to be lean, fast, and focused. They exist to help founders validate ideas quickly and learn from real users before investing heavily in development. In reality, many MVPs drift far from that original goal.

Founders often add too many features, build based on assumptions, or launch without clear success metrics. What starts as a “minimum” product slowly turns into a small version of the final vision: expensive, slow to build, and difficult to learn from.

In competitive markets where startups operate on a limited runway, those mistakes are costly. The longer it takes to reach real users, the longer it takes to discover whether the idea actually works.

Understanding the most common and sometimes overlooked MVP mistakes can help founders move faster, reduce risk, and focus on what MVPs are actually meant to do: validate ideas through real-world feedback.

The concept of the MVP became popular through the Lean Startup methodology introduced by Eric Ries. The idea is simple: build the smallest version of a product that delivers value while testing a core assumption.

An MVP is not meant to be polished, feature-rich, or complete. Its purpose is learning.

It’s also important to distinguish MVPs from other early-stage product artifacts:

The key difference is that an MVP tests market assumptions, not just usability or design. It helps answer questions like:

An MVP that answers those questions quickly is far more valuable than one that simply looks impressive.

| Artifact | Primary Goal | Fidelity | Best For |
| --- | --- | --- | --- |
| Prototype | UX/UI Validation | Low to medium | Testing flow & design before code. |
| MVP | Market Validation | Functional | Testing core value & willingness to pay. |
| Beta Version | Technical Stability | High | Finding bugs & scaling performance. |

One of the most common traps is turning the MVP into a “small version of the final product.” This often happens because founders worry that users will judge the product too harshly if it feels incomplete. The instinct is understandable; no one wants to launch something that feels unfinished.

But overbuilding creates the opposite problem. It slows development, increases costs, and introduces unnecessary complexity. Instead of testing one idea, the product ends up testing many at once, making feedback harder to interpret.

It also introduces technical debt, the implied cost of additional rework caused by choosing an easy solution now instead of a better approach that would take longer. When teams rush to pack "nice-to-have" features into an early release, they often create "spaghetti code," where secondary features become so tangled with the core engine that the entire codebase becomes difficult to maintain or pivot.

The better approach is ruthless prioritization. Frameworks like the MoSCoW method (Must-have, Should-have, Could-have, Won’t-have) or the Kano model help teams identify which features are essential for validating the core value proposition.

Ultimately, an MVP should solve one important problem exceptionally well, rather than solving several problems partially through a cluttered, high-debt interface.
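As a rough illustration of MoSCoW in practice, the sketch below tags each backlog item with a priority and keeps only the Must-haves for the MVP. The `Feature` type, the `mvp_scope` helper, and the invoicing backlog are hypothetical, made up for this example rather than taken from any particular tool.

```python
from dataclasses import dataclass
from enum import Enum


class Priority(Enum):
    MUST = "Must-have"
    SHOULD = "Should-have"
    COULD = "Could-have"
    WONT = "Won't-have"


@dataclass(frozen=True)
class Feature:
    name: str
    priority: Priority


def mvp_scope(backlog: list[Feature]) -> list[str]:
    """Return only the Must-have features; everything else waits."""
    return [f.name for f in backlog if f.priority is Priority.MUST]


# Hypothetical backlog for an invoicing tool (illustrative only).
backlog = [
    Feature("Create and send an invoice", Priority.MUST),
    Feature("Accept card payment", Priority.MUST),
    Feature("Recurring invoices", Priority.SHOULD),
    Feature("Custom branding themes", Priority.COULD),
    Feature("Multi-currency ledger", Priority.WONT),
]

print(mvp_scope(backlog))
```

The point of the exercise is less the code than the forced decision: every item must land in exactly one bucket, and only one bucket ships.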

"You should never aim for a perfect MVP; the primary objective at this stage is to validate the product and its market viability as quickly as possible. By identifying only the fundamental features needed for user testing, you create the space to fail fast, test your hypotheses, and refine your roadmap. At Designli, we focus on delivering an MVP that meets rigorous testing criteria, guaranteeing technical quality while prioritizing the validation that is key to long-term success."

- Carlos Zabala, Product Delivery Manager at Designli

Many MVPs fail long before a single line of code is written. Founders often assume they understand the user problem because they personally experience it, and confirmation bias then steers them toward the evidence that agrees. While a founder’s intuition is a starting point, building based solely on assumptions is the fastest way to create a solution for a "ghost problem."

Products frequently fail not because the features are poorly designed, but because the underlying problem isn't urgent enough to warrant a change in user behavior. In the software world, this is the difference between a "vitamin" (nice to have) and a "painkiller" (essential). Without validation, you risk wasting your development runway on a product that no one is looking for.

The most effective way to mitigate this risk is through a rigorous user discovery phase. Even five to ten structured interviews can reveal how potential users currently solve the problem, what technical friction they face, and whether they have a budget for a new solution.

Launching an MVP is only the beginning of the product lifecycle. The true value of an early release isn't the initial revenue; it's the data. A common mistake is relying on vanity metrics such as total page views or "free" signups, which look encouraging on a slide deck but fail to correlate with long-term success.

These surface-level numbers often hide deeper issues regarding user retention and churn. If 1,000 people sign up but only five return the next day, your MVP hasn't validated the product; it has only validated your marketing. Real feedback requires a balance of qualitative insights (the "why") and quantitative data (the "what").
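The signup example above works out to a very small number. A minimal sketch of the arithmetic follows; the function name `day_one_retention` is an assumption for illustration, not a standard API.

```python
def day_one_retention(signups: int, returned_next_day: int) -> float:
    """Share of new signups who came back the following day."""
    if signups <= 0:
        raise ValueError("signups must be positive")
    if not 0 <= returned_next_day <= signups:
        raise ValueError("returned_next_day must be between 0 and signups")
    return returned_next_day / signups


# The scenario from the text: 1,000 signups, five return the next day.
rate = day_one_retention(signups=1_000, returned_next_day=5)
print(f"Day-1 retention: {rate:.1%}")  # prints "Day-1 retention: 0.5%"
```

A signup count of 1,000 looks healthy on a slide; a 0.5% return rate tells a very different story about the product itself.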

Understanding user behavior requires looking beyond stated feedback. Evidence-based roadmaps are built by observing how people actually interact with the product through structured observation tools.

Another subtle but expensive mistake is prioritizing features based on what feels exciting or "innovative" rather than what solves the core problem. In a startup environment, every hour spent on an interesting but non-essential feature carries a high opportunity cost, draining the limited time and budget required to perfect the product's primary function.

Teams often fall into the trap of adding "bells and whistles" because they seem technically impressive or follow a current industry trend. Over time, these additions dilute the focus of the MVP, leading to feature bloat. This not only confuses the user but also creates a heavier codebase that is slower to pivot when real data arrives.

To maintain discipline, we recommend the Jobs-to-be-Done (JTBD) framework. Instead of asking what features users want, the focus shifts to what task the user is "hiring" your product to accomplish.

By maintaining this focus, you ensure that your development resources stay strictly aligned with the product’s value proposition.

Perhaps the most overlooked mistake is launching an MVP without a clear measurement strategy. Some teams release their product and simply wait to see what happens, but "waiting for a signal" isn't a strategy; it’s a gamble. Without predefined success metrics, it becomes impossible to determine if the MVP is a foundation for growth or a candidate for a pivot.

Before the first line of code is deployed, teams must define what Product-Market Fit (PMF) indicators look like. General engagement numbers are a start, but disciplined teams focus on actionable metrics over vanity stats:

To turn an MVP into a true experiment, you need a robust data schema. Instead of just tracking generic clicks, use a structured event-tracking tool like Segment, Mixpanel, or Amplitude. This allows you to map out the user journey and identify exactly where friction occurs.
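To make the idea of a structured event schema concrete, here is a small sketch in plain Python. It does not use the Segment, Mixpanel, or Amplitude SDKs; the `Event` type, the event names, and the three-step `FUNNEL` are all hypothetical. As a simplification, it also ignores timestamp ordering and only checks that a user has fired every earlier step.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Event:
    user_id: str
    name: str            # e.g. "signed_up"
    timestamp: datetime


# Hypothetical critical path for a collaboration product.
FUNNEL = ["signed_up", "created_project", "invited_teammate"]


def funnel_counts(events: list[Event], steps: list[str]) -> list[tuple[str, int]]:
    """Count distinct users at each step who also fired every earlier step."""
    users_by_event: dict[str, set[str]] = {}
    for e in events:
        users_by_event.setdefault(e.name, set()).add(e.user_id)

    remaining = None
    counts = []
    for step in steps:
        users = users_by_event.get(step, set())
        remaining = users if remaining is None else remaining & users
        counts.append((step, len(remaining)))
    return counts


now = datetime.now(timezone.utc)
events = [
    Event("u1", "signed_up", now),
    Event("u2", "signed_up", now),
    Event("u3", "signed_up", now),
    Event("u4", "signed_up", now),
    Event("u1", "created_project", now),
    Event("u2", "created_project", now),
    Event("u1", "invited_teammate", now),
]

for step, count in funnel_counts(events, FUNNEL):
    print(step, count)
```

With this sample data, four users sign up, two create a project, and one invites a teammate, so the largest drop happens between signup and project creation. That is the step to investigate first.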

An MVP is not a finished product; it is a live experiment. When these metrics are tracked from day one, they create an automated feedback loop that informs your post-launch roadmap. By treating your launch as a data-gathering exercise, you ensure that your next iteration is based on evidence rather than intuition.

In the current landscape of AI-assisted development, a new trap has emerged: the "vibe-coded" MVP. With the rise of LLMs and no-code AI builders, it has never been easier to generate a functional interface or a "wrapper" that mimics a sophisticated application.

However, many founders mistake a successful Proof of Concept (PoC) for a scalable product. While "vibe coding" is excellent for rapid prototyping and front-end experimentation, it often lacks the underlying system architecture required to handle real-world complexity.

The danger of a vibe-coded MVP is that it often lacks a "source of truth" for data, robust security protocols, or an efficient API layer. What works for ten users in a demo will often collapse under the weight of 1,000 users because the "glue" holding the app together consists of brittle, AI-generated snippets rather than a cohesive, engineered framework.

Use AI to accelerate your workflow, not to replace your software blueprint. Ensure your MVP has a clear architectural roadmap so that when you move beyond the "vibe" stage, your foundation is ready to scale.

Whether your MVP budget is large or limited, disciplined product practices still determine the outcome. Clear validation goals, structured development, and focused execution help ensure the product generates meaningful results.

| Phase | Action Item | Success Signal |
| --- | --- | --- |
| Discovery | Validate the Problem via 10+ Structured Interviews | You can articulate the "pain" without mentioning your solution. |
| Definition | Map the 'Job-to-Be-Done' & Critical Path | The user journey has 3 steps or fewer to reach value. |
| Architecture | Select a Scalable Tech Stack (Avoid the "Vibe" Trap) | Your MVP foundation can handle a 10x user spike. |
| Prioritization | Apply MoSCoW / ICE Scoring to Features | 60% of your initial "ideas" are moved to Version 2.0. |
| Deployment | Launch to a 'Closed Alpha' Group | You get high-quality, qualitative feedback from early adopters. |
| Measurement | Audit 'Aha! Moment' via Behavioral Analytics | You see exactly where users drop off in the funnel. |
| Iteration | Data-Informed Refactoring | Your roadmap is based on user logs, not gut feelings. |
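The Prioritization row can be sketched in a few lines. ICE is commonly computed as Impact × Confidence × Ease, each rated from 1 to 10, though some teams average the three instead. The product form is assumed here, and the idea names and ratings are invented for illustration.

```python
def ice_score(impact: int, confidence: int, ease: int) -> int:
    """ICE as a simple product of three 1-10 ratings (one common formulation)."""
    for value in (impact, confidence, ease):
        if not 1 <= value <= 10:
            raise ValueError("each rating must be between 1 and 10")
    return impact * confidence * ease


# Hypothetical ideas with (impact, confidence, ease) ratings.
ideas = {
    "Send an invoice": (9, 8, 7),
    "Card payments": (8, 7, 5),
    "Recurring invoices": (6, 5, 4),
    "Custom themes": (3, 6, 6),
    "Multi-currency": (5, 3, 2),
}

ranked = sorted(ideas, key=lambda name: ice_score(*ideas[name]), reverse=True)

# Keep roughly the top 40% for the MVP and defer the rest to Version 2.0.
keep = max(1, len(ranked) * 40 // 100)
mvp, v2 = ranked[:keep], ranked[keep:]

print("MVP:", mvp)
print("Version 2.0:", v2)
```

In this sample, two of five ideas stay in scope and three (60%) move to Version 2.0, which matches the success signal in the table. The ratings themselves remain judgment calls, so the scores are best treated as a conversation starter rather than a verdict.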

Many MVP mistakes happen because the process leading up to development is unclear. When product strategy, user validation, and feature prioritization aren’t structured properly, teams naturally drift toward overbuilding, misaligned features, or launching without meaningful feedback loops.

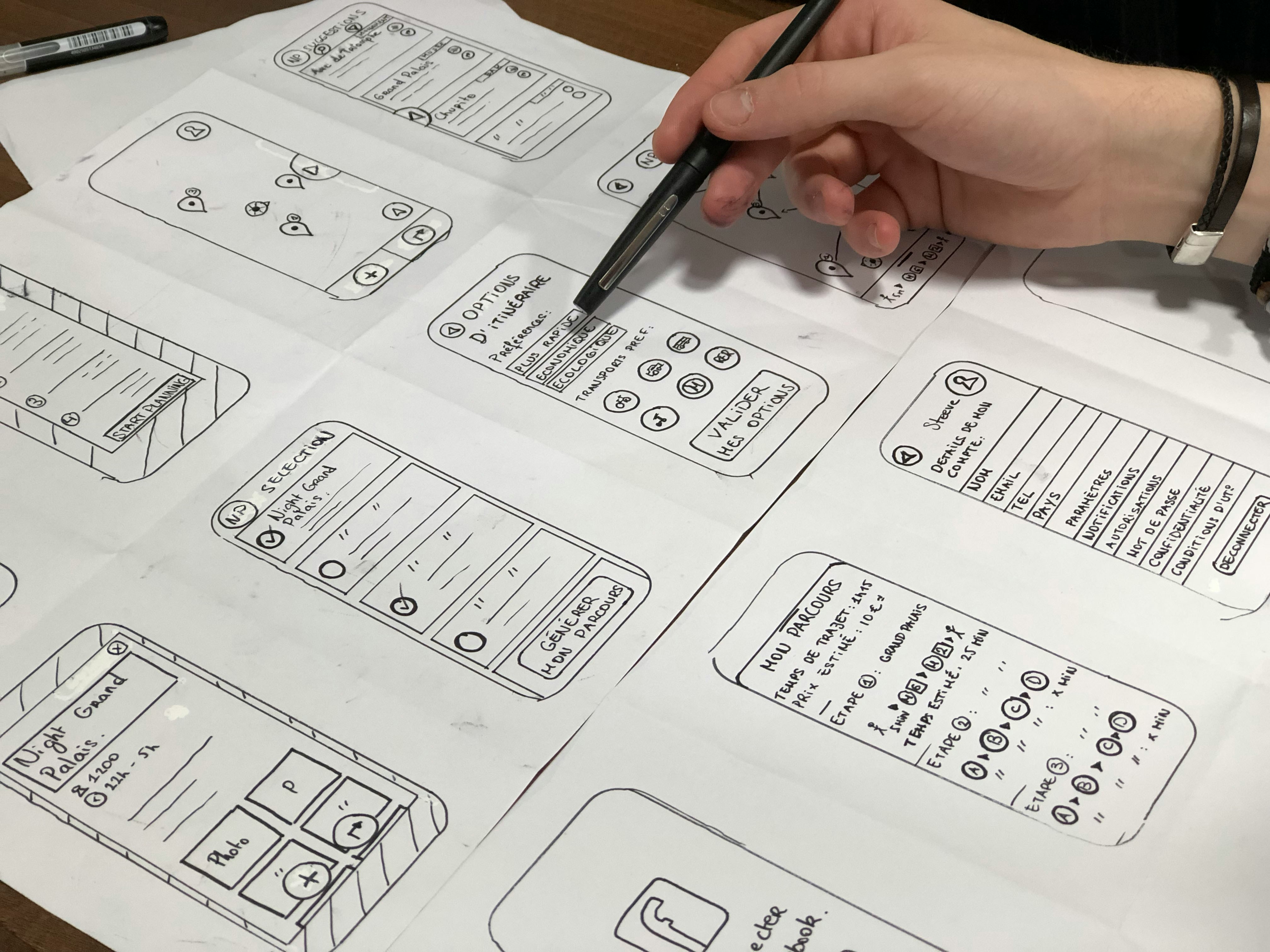

At Designli, we eliminate those risks before development begins. The process starts with the SolutionLab, a 2-week design sprint. During this phase, founders work with a multidisciplinary team to clarify the core problem, identify the primary user journey, and define the smallest feature set capable of delivering real value. We develop an interactive prototype that visually simulates how the product will function. This allows founders to test assumptions and gather feedback from early users before writing a single line of code.

Once the roadmap is defined, development moves into Designli Engine, where a dedicated custom team builds the MVP with precision and speed. Because the SolutionLab has already settled scope and priorities, the team can focus on execution. This structured approach keeps the product lean, ensures feature prioritization remains aligned with the core user problem, and creates clear metrics for measuring success once the MVP launches.

By combining early validation, structured planning, and focused execution, Designli helps founders avoid the most common MVP development mistakes.

If you have already validated your idea with AI tools and seek feedback, we recommend our Engineering Intensive, a two-week deep technical assessment designed for products built quickly with AI or “vibe-coding” tools. Our senior engineers perform a full codebase audit, security and vulnerability testing, and load and stress testing to uncover hidden risks in rapidly generated software.

Using industry-standard analysis tools and hands-on engineering expertise, we identify technical debt, architectural weaknesses, and performance limits. You’ll receive a clear roadmap outlining how to stabilize, secure, and scale your product with confidence.

A Proof of Concept (PoC) is a small experiment used to test whether a specific technical idea is feasible (for example, “Can this AI accurately summarize this dataset?”). An MVP, by contrast, is a functional product designed to test whether the market actually wants the solution. PoCs are typically internal and intentionally lightweight. An MVP, however, must be stable enough for real users to interact with and provide meaningful feedback.

MVP costs vary widely, but a custom and scalable build typically ranges from $75K to $1205K+ depending on complexity. While no-code or AI-generated solutions may reduce upfront cost, investing in sound architecture early can prevent expensive rebuilds as your product grows.

The right approach depends on what you’re trying to validate. No-code tools are ideal for rapid experiments, Proofs of Concept (PoCs), and simple smoke tests. But products that rely on complex data models, security requirements, or custom integrations often benefit from a custom tech stack.

Building a successful MVP requires strategic restraint. It is tempting to delay a launch until the interface feels polished or the feature set seems complete, but in software development, completeness often slows down the most important goal: reaching the market and learning from real users.

Successful MVPs do not rely on complexity to impress users. Their strength lies in shortening the feedback loop. By focusing on a single core problem, managing technical debt, and avoiding rushed “vibe-coded” solutions without a scalable plan, founders can protect their runway and iterate based on real data rather than intuition.

At the earliest stage of a product’s lifecycle, the most valuable metric is how quickly a team can learn. Starting small and engineering with intention allows founders to build not just a product, but also a foundation for product–market fit.

If you are on the verge of creating a product, let us assist you with top-notch guidance; schedule a consultation.
